Why I Don’t Show Game Play For Benchmarks

I’ve been sharing game benchmarks on laptops and PC hardware for years, but why do I always use graphs instead of showing actual game play?

Here’s what it comes down to: graphs are a more efficient and accurate way of displaying lots of information, in a form that can be fairly compared.

Let’s break this down.

Graphs are a more efficient way of displaying information

This is, after all, the whole point of a graph: to display information concisely. It’s much easier and faster for the viewer to take away the results from a graph than from watching game play.

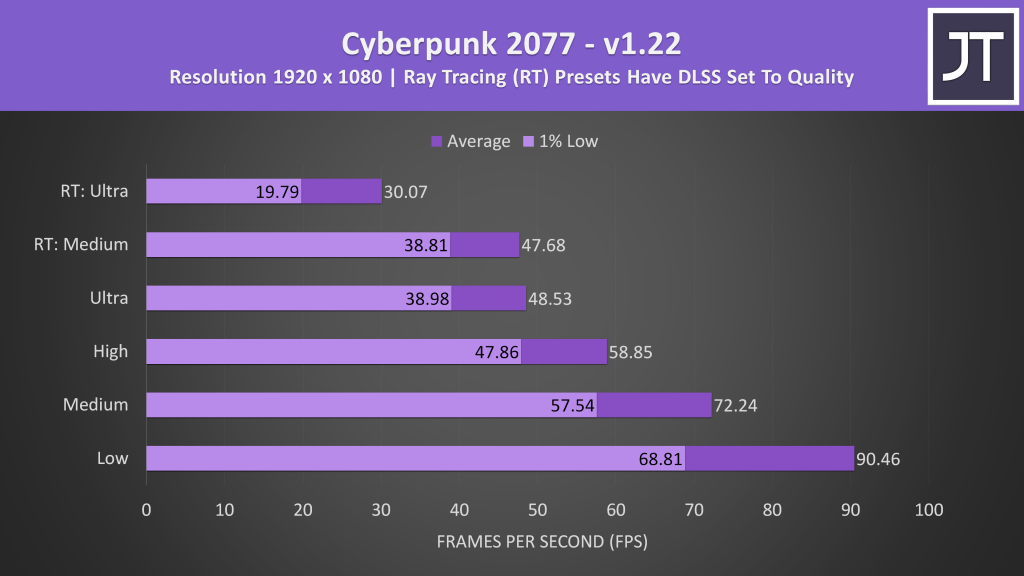

Take Cyberpunk 2077 for example. I test this game at 6 different setting presets on all gaming laptops. Each setting preset is tested 3 times for 90 seconds each, so once you include reloading the game after each test, we’re looking at about 30 minutes of testing time to produce the graph below:

I honestly doubt anyone wants to sit through ~30 minutes of game play to see the same results. Even if I showed all 6 setting presets on screen side by side, that’s still 270 seconds if I were to show all three test runs. Now, you could argue that showing just one test run is fine, but that’s still 90 seconds: longer than it takes to digest the graph above, though admittedly much more reasonable than half an hour.
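For reference, the time figures above work out as follows, using the 3 runs of 90 seconds per preset from the text. The gap between 27 minutes of pure run time and the roughly 30-minute total is the time spent reloading the game between tests.

```latex
\begin{aligned}
t_{\text{all presets, all runs}} &= 6 \times 3 \times 90\ \text{s} = 1620\ \text{s} = 27\ \text{min} \\
t_{\text{side by side, 3 runs}} &= 3 \times 90\ \text{s} = 270\ \text{s} \\
t_{\text{side by side, 1 run}} &= 90\ \text{s}
\end{aligned}
```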

Graph data is easier to fairly compare

There’s a reason I average the results of three or more test passes: there’s always some run-to-run variance. How much varies by game, and may even depend on the hardware to some degree, but it’s always there.

You’re not going to pick up this sort of thing accurately by watching game play. Good luck watching three videos side by side and doing the math on the constantly changing average and 1% low FPS values.

Showing averaged values in graphs makes comparisons more accurate, as we’re looking at data from multiple test runs rather than just one.
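To illustrate the kind of math involved, here is a minimal Python sketch that turns per-frame times (in milliseconds) from several runs into an averaged FPS figure and an averaged 1% low. It assumes the frame times have already been exported by a capture tool, and it uses one common definition of the 1% low (the FPS equivalent of the 99th-percentile frame time); capture tools differ on this, so treat it as an illustration rather than the exact method behind the graphs here. The function names are hypothetical.

```python
from statistics import mean


def run_metrics(frame_times_ms: list[float]) -> tuple[float, float]:
    """Return (average FPS, 1% low FPS) for a single test run."""
    # Average FPS = frames rendered / total seconds elapsed.
    total_seconds = sum(frame_times_ms) / 1000.0
    avg_fps = len(frame_times_ms) / total_seconds

    # 1% low (one common definition, assumed here): take the
    # 99th-percentile frame time and convert it to FPS.
    ordered = sorted(frame_times_ms)
    idx = (99 * len(ordered)) // 100  # integer index avoids float rounding
    one_pct_low_fps = 1000.0 / ordered[idx]
    return avg_fps, one_pct_low_fps


def summarize_runs(runs: list[list[float]]) -> tuple[float, float]:
    """Average the per-run metrics across several test passes."""
    per_run = [run_metrics(r) for r in runs]
    avg_fps = mean(a for a, _ in per_run)
    one_pct_low = mean(low for _, low in per_run)
    return avg_fps, one_pct_low


if __name__ == "__main__":
    # Made-up frame times for three short runs (not real benchmark data).
    runs = [
        [10.0] * 99 + [20.0],
        [10.0] * 100,
        [11.0] * 98 + [25.0, 30.0],
    ]
    avg, low = summarize_runs(runs)
    print(f"Average FPS: {avg:.1f}, 1% low: {low:.1f}")
```

This sketch averages each run’s metrics so every pass counts equally; pooling all frames from all runs into one list is another reasonable choice.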

Recording game play can affect results

The act of screen recording requires system resources. By capturing game play, you may inadvertently take away CPU, GPU, RAM or storage resources that the game could otherwise use to perform better, which skews the results.

Many screen recording tools, such as Nvidia ShadowPlay, use surprisingly few resources these days, and the impact is often negligible. However, recording still adds some overhead compared to not recording at all.
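One way to check whether that overhead actually matters is to benchmark the same scene several times with and without recording, then compare the difference against normal run-to-run variance. The minimal Python sketch below does this; the helper names and the “drop no larger than one standard deviation” threshold are hypothetical choices for illustration, not an established standard.

```python
from statistics import mean, stdev


def recording_overhead_pct(fps_without: list[float], fps_with: list[float]) -> float:
    """Percentage drop in mean FPS when screen recording is enabled."""
    baseline = mean(fps_without)
    return (baseline - mean(fps_with)) / baseline * 100.0


def is_within_noise(fps_without: list[float], fps_with: list[float]) -> bool:
    """Hypothetical rule of thumb: treat the drop as negligible if it is no
    larger than the sample standard deviation of the baseline runs.
    Needs at least two baseline runs, since stdev() requires two values."""
    drop = mean(fps_without) - mean(fps_with)
    return drop <= stdev(fps_without)
```

With fewer than two baseline runs, `stdev` raises `statistics.StatisticsError`, which is one more reason to run several passes rather than one.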

Using an external capture device avoids this overhead, but it might actually make things more misleading, especially on laptops. This is an entirely different topic, but in short, many laptops use Nvidia Optimus, which passes the discrete GPU’s output through the integrated graphics. If an external display output port connects directly to the laptop’s discrete GPU, capturing through that port bypasses Optimus. This is the same reason that using an external screen on a gaming laptop can significantly boost FPS.

Other common concerns

These are some of the arguments I often hear against graphs in favor of seeing game play.

Watching game play lets me experience how the game will perform

Given that YouTube videos are limited to 60 FPS at best, and 30 FPS is still more common, you’re not actually experiencing the game play through the video the same way you would by playing it.

This is especially true with high refresh rate panels and games that can hit higher frame rates.

But you can just fake the graphs!

Some people argue that the data in my graphs can be faked, as opposed to seeing the game play for themselves. This is a pretty weak argument in my opinion.

Firstly, if my data were faked, there wouldn’t be countless other people (other reviewers and viewers alike) reporting similar results over the years. Yes, collecting the data takes a lot of time, but it’s essentially my job and I enjoy doing it. It’s not difficult and I have access to the hardware, so there’s no need to fake it and lose the trust I’ve built with viewers over the years. In short, there’s nothing to gain.

Secondly, it would be quite easy to fake game play as well. Just because you can see a screen capture doesn’t mean you know all the details of the hardware it’s running on. Sure, it would be harder to fake if you’re literally pointing a camera at a laptop, but the points above still stand.

At the end of the day, in either case there’s a level of trust you’re giving to the reviewer, so let the data they’ve produced over time speak for itself.

Your results don’t match mine, so you must be faking them!

It’s important to understand that you often won’t be able to directly compare your test results with mine, or with any other reviewer’s for that matter.

Take Fortnite, for example: performance in that game depends heavily on where in the map the test takes place and what other players are doing during the test run. Ideally, a reviewer will test in the same place each time so that results are comparable within their own content. Personally, I do this using the replay feature so that the same scenario can be tested consistently.

This means that unless you know where in the game I generate my replays, you can’t directly compare your results with mine, or my results with any other reviewer’s.

I have considered screen recording the test runs for all of the games I benchmark and making them available somewhere on this website, which would help others compare with my results, but I haven’t done this yet.

The only way to really compare results directly with others is to use the built-in benchmarks that some games provide, as these attempt to perform the same reproducible test run each time.

This is why I test a mixture of games: some with built-in benchmarks, so that viewers can see how their hardware compares, and some using general game play, as this often reflects actual performance better than many built-in game benchmarks do.

Additionally, when I test all setting levels in a game, I run them from lowest to highest in the same session. People often tell me that their laptop performs better in, say, the Shadow of the Tomb Raider benchmark at highest settings after running only one or two test passes. By the time I get to highest settings, the test has already been run multiple times at lower settings and has warmed up the laptop, as opposed to running from a cold start. This matters much more on laptops than on desktop PCs, as their thermal and power limits are more constrained, and it can definitely affect results.

I test all laptops this way while most others do not, so for this reason alone I would only consider my benchmark data comparable within my own content. I feel this better represents how you’d actually play a game, after heat saturation has kicked in, rather than for just a few minutes after the laptop has been sitting idle.

Is game play useless?

I’m not saying people that show game play are wrong or that there’s no place for that sort of content. I am just giving the reasons why I personally prefer to show my data visually through graphs.

In my opinion, the main benefit of watching game play is so that you can see how the frame counters, clock speeds, power limits and resource usage metrics look in real time with an overlay such as MSI Afterburner.

Personally, I prefer to collect thermal data from a single game so that results are comparable, and again I show these in graph format, but I can see why the above information would be appealing.

Regardless, for the reasons outlined above, I will continue using graphs to display the game data that I collect. If you still want to see actual game play, then my videos are not the content you’re after.

23 Comments

SUNNY SAURABH

Bro plzz review dell g15 5510 r5x3050ti + 5800h, is it worth buying or go for legion 5i?

Jarrod

Haven’t tested either of those so can’t fairly compare at this time.

SUNNY SAURABH

Please make video on best rtx 3050 and 3050ti laptops in market whenever possible, I think there are lots of people looking for this budget friendly area!

Emilio

Everything said in this article makes a lot of sense. I think it speaks to the success of your channel and the type of audience you’ve amassed over the years. If anyone wants to see gameplay on a specific laptop or processor type, there are dozens of other videos that do that.

When I click on one of your videos, I know I’m going into a sea of information that’s shown in a way where I can easily make sense of it. Great explanation of your methods, and keep up the great work!

Jarrod

Cheers!

Jaden

Hey Jarrod,

I put my comment down here because this is the latest article I found.

European Legion 5 Pro users are having a really big problem. You may have heard that on some laptops (well, basically all laptops in central Europe) Lenovo disabled H.264 encoding without telling people about it. This hurts people like me, and especially creative people like you, because software like OBS is unusable (or only usable with CPU encoding).

We need your help. Can you test whether your Lenovo Legion 5 Pro has this limitation, e.g. just by trying to screen-record with OBS with NVENC enabled? If you don’t get any errors, would it be possible to try a fix by sharing your BIOS file with us?

And if you do get errors, could you bring people’s attention to it, both to warn potential buyers and to put a little pressure on Lenovo?

We really don’t know what to do here, so please help us.

Thanks, Jaden.

Jarrod

I have heard. Mine is fine, but I bought it in Australia, and I don’t know which regions they are limiting this in. I am just using the latest BIOS available via the Vantage software. I don’t suppose there’s a whole lot you can do until Lenovo resolves the issue if you can’t get that BIOS anywhere else. Maybe use a VPN to the US and search their download site.

Kareem Al-Saudi

Hey Jarrod!

Will you be doing a review on the upcoming Legion 7i? Would be interesting to see how it fares against its AMD counterpart.

Jarrod

I do not know at this time, Lenovo know I want the Intel models but they haven’t been able to get any to me.

Jason

Personally I like the graphs. It’s one of the things that make your reviews trustworthy and less speculative.

Would you consider making some of your laptop benchmarks available on your website? It would be nice to come and look at which laptops perform best in games, which have the best average FPS per dollar, etc.

Jarrod

Yeah at some point I’d like to, it’s just a time / data entry thing.

Tut Tut

Hey Jarrod, great explanations as I have seen people who are skeptical about this.

For your latest Nitro 5 review, I have a few questions that confused me, and since this is the latest post as of now, I wanted to ask: why is the Ryzen Nitro 5 running absurdly hot compared to the Intel model, given that it ran hotter at about 10W less?

My Nitro 5 with the 5800H and the same 3060 runs at temperatures and power levels more similar to the i5 model. Also, the latest v1.08 BIOS can cause thermal issues, so maybe the previous 1.06 BIOS would work better. I am quite certain that it is a thermal paste issue, as I have seen others getting much better temps on their Ryzen models. Thanks in advance to a great reviewer, and what paste would you suggest for a repaste, for longevity and minimal pump-out?

Tut Tut

Sorry for the double post, it did not confirm the first time and I accidentally reposted.

Jake

Hi Jarrod

I’ve looked at most of your reviews of the XMG laptops and they were great, so I thought I’d ask a question about them, but about the 2021 models. From what I can see, the Australian version of the XMG Neo 15 is the Infinity W5, and I’ve been comparing it to the Aorus 15P to try and pick the better one.

I’m looking at the AORUS 15P Xd and the Infinity W5 11R7N-888

They seem to have the same specs and chipset, so it would mainly come down to build quality, thermals, and then the 1080p vs 1440p screen.

Do you have any opinions on these?

brokenaimbot

Hi Jarrod, there is currently a big thread on the Lenovo support forums about microstutter issues on the Legion 5 Pro 5800H and last year’s 4800H Ryzen models.

https://forums.lenovo.com/t5/Gaming-Laptops/Legion-5P-15ARH05H-micro-stutters/m-p/5067079?page=1

I want to ask if you personally have experienced these issues completely at random during normal use regardless of what tasks are running or even when the system is idle.

A video of a microstutter event is captured here and happens 5 seconds into the video https://www.youtube.com/watch?v=8qvWrH3HBHQ

Furthermore, the issue can be reproduced on demand, as shown in this video:

https://www.youtube.com/watch?v=qNFfb7uFwDQ

and how it appears externally to the user

https://www.youtube.com/watch?v=TAIofiKw3DQ

In my case the issue continues persisting after lenovo repairs and lenovo are refusing to acknowledge the issue and provide a refund. After seeing your video with the known problems with the stock ram I wonder if you could provide some exposure to this issue.

before first repair https://www.youtube.com/watch?v=IWVRCXiI08o

after first repair https://www.youtube.com/watch?v=MO_x2WBhH1A

after 2nd repair https://www.youtube.com/watch?v=ZF8ImR19wVY

I’m unable to produce the same stutter issues with the vantage software on my thinkpads so I think it’s fair to say this shouldn’t happen with the vantage software.

https://www.youtube.com/watch?v=Rpdu7uSoa1I

A lenovo moderator on the forum has even admitted the issue exists

https://forums.lenovo.com/t5/Gaming-Laptops/Legion-5P-15ARH05H-micro-stutters/m-p/5067079?page=20&clickid=yVBQuB0RyxyIRwNxiAS6PRWLUkBWG8TFDydrXQ0&irgwc=1&PID=121977&acid=ww%3Aaffiliate%3Abv0as6#5408320

Jarrod

No, though if it were happening on mine, would it not show in the 1% lows compared to other laptops I test? I suppose it depends how “micro” it is, as I don’t report 0.1% lows, for instance. As for the super obvious, ridiculous examples you’re showing in the first video, absolutely not, I’ve never seen such a thing on any machine. Perhaps it is an H.264 decode issue; if I recall, that was taken away in some countries in a BIOS update.

Brokenaimbot

The music and video desktop captures are just to emphasise the effect, as is the reproduction of the stutter. No one is suggesting that inducing the effect on purpose is normal use. As I’ve said, the stutter events are completely random during normal use and can last from a fraction of a second to a full five seconds or more regardless of task or system load, and typically occur 3 or 4 times a day.

Some people have been reporting this since last year for a wide range of graphics or cpu hardware specifications for the legion range. It seems to persist across all BIOS versions before the recent decoder/encoder debacle.

Noel

Hello.

I watched your Laptop vs Desktop 3060 video on YouTube today, and have to completely agree.

I live in Japan. I purchased a rebranded Tongfang 15.6″ 144Hz laptop from DOS Para (Thirdwave Computers) here in Osaka.

———————————————————————–

Specs on purchase were:

Intel i7 10875H (2.30–5.10 GHz) 8C/16T

32GB (2x 16GB) Samsung 2933 SO-DIMM

512GB Phison NVMe Gen 3

180W Power Supply

175,000yen

I am into gaming and also some creative work.

———————————————————————–

I upgraded it by adding:

64GB Crucial CT2K32G4SFD832A [SO-DIMM DDR4-3200 PC4-25600 32GB x2]

and

SAMSUNG 980 PRO MZ-V8P2T0B/IT 2TB NVMe Gen 4

Plus an external SAMSUNG 870 QVO MZ-77Q8T0B/IT 8TB 2.5-inch SATA SSD

This brought the total to 300,000yen

———————————————————————–

Well worth it.

Glad to see test results backing up my purchase.

Better than any Desktop PC I have owned..!!

(Previously an i7 950, 12GB DDR3 RAM, 4TB 7200 RPM Seagate, GTX 980 based desktop)

Keep making great videos on YouTube.

Jarrod

Good to hear!

Tim

Hey Jarrod, I am thankful for the reviews you have made on the TUF A15 and Nitro 5 2021 models. In my country, they are both the cheapest 3060 laptops by at least $400. Both of them have the 5800H and 3060 95W with 16GB of good RAM and 144Hz. I don’t really care about battery life. What would you recommend between the two, considering they are the same price? Thanks a lot in advance, love all your videos.

Jarrod

Difficult to say without trying both with same specs myself, unfortunately I still haven’t been able to get the 3060 model, if I do I’ll probably do a dedicated video comparing the two. I guess if those are the only choices though based on my own experience I’d probably be leaning towards the TUF over the Nitro.

TIM

Hmm how the tables have turned, although I feel like the 144hz screen would be better on the nitro and i guess you recommended cuz of slightly better build and good stock ram. Tho the thermals are better on the nitro. Thanks for the HELP!