Laptop Latency Comparison – Intel vs AMD vs Optimus

There’s a delay between the moment you click your mouse in a game and the moment the result actually appears on screen. This is known as end-to-end system latency. Is there a difference between Intel and AMD gaming laptops? I’ve tested two laptops with and without Optimus enabled to see what the differences are.

What Is End-To-End System Latency?

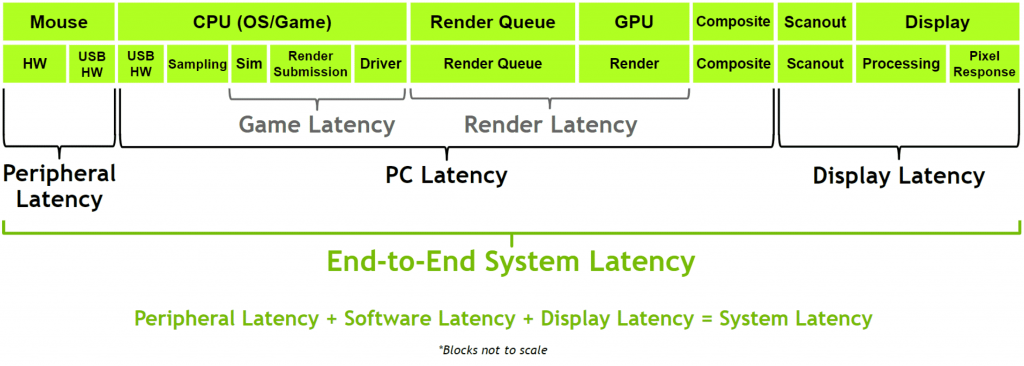

Here’s how Nvidia defines end-to-end latency:

Basically it includes all links in the chain between the input device (mouse) and output (display screen). As we can see, there are many steps in between that could be dependent on the underlying hardware, which is why I want to compare Intel and AMD laptops.

What Is Optimus?

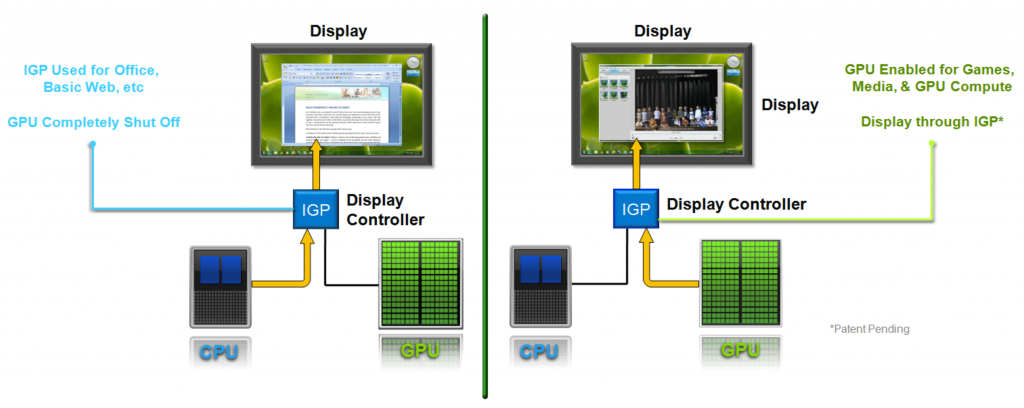

I also want to find out how much of a delay Optimus adds. The diagram below shows how Optimus works in laptops.

Basically when the Nvidia discrete graphics is rendering a game, the frames are first sent via the integrated graphics of the processor before reaching the display.

Some laptops have a MUX switch, which gives you the option to disable Optimus after a reboot. Upon doing this, the Nvidia discrete graphics will be connected directly to the display.

Based on the fact that Optimus literally has an additional link in the chain, I’m expecting the total system latency with it enabled to be higher.

Testing Goals

With that understanding in mind, here are the two main goals for the latency testing in this article:

- What is the total system latency difference between Intel and AMD gaming laptops?

- How much of an overhead does Optimus add to each?

Testing Tools

I’ve done the testing using Nvidia’s LDAT (Latency Display Analysis Tool). It sits on the screen and measures the latency of the entire laptop.

LDAT is placed on a section of the screen that changes in luminance when a mouse click occurs. In this test, I’ve used CS:GO at 1080p with all settings at minimum, following the instructions Nvidia provides in the LDAT user guide.

LDAT contains a luminance sensor and a physical button which initiates a mouse click. It connects to the laptop via a USB cable, and software running on the laptop measures the time between the “mouse click” and the change in luminance on screen. In the CS:GO example, a mouse click fires instantly and that section of the screen changes from black.

The software makes it easy to perform multiple tests. I have tested each laptop configuration 100 times and taken the averages.
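As a rough illustration of how the average and standard deviation quoted later in this article can be derived from a batch of readings, here is a minimal Python sketch. The function name `summarize_latency` and the sample values are hypothetical placeholders, not output from LDAT or measurements from this article, and it assumes the sample (n - 1) standard deviation, which may not be what the LDAT software reports.

```python
from statistics import mean, stdev


def summarize_latency(samples_ms):
    """Return (average, sample standard deviation) for latency readings in ms."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples to compute a standard deviation")
    return mean(samples_ms), stdev(samples_ms)


# Placeholder readings for illustration only; NOT measurements from this article.
example_samples_ms = [28.0, 30.0, 32.0, 30.0]

avg_ms, sd_ms = summarize_latency(example_samples_ms)
print(f"average: {avg_ms:.1f} ms, standard deviation: {sd_ms:.2f} ms")
```

A lower standard deviation means the individual click-to-screen times are clustered more tightly around the average, which is what “more consistent” refers to in the results.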

Laptops Tested

All testing was done with the XMG Neo 15, a Tongfang chassis also sold as the Eluktronics Mech-15 G3. Both laptops were tested with the exact same 16GB DDR4-3200 CL22 dual channel memory kit, both have RTX 3070 graphics with the same power limits (125-140W), and both have the same 1440p 165Hz screen (BOE0974).

Both laptops were also tested with their highest performance modes with fans set to maximum speed with a cooling pad for best results.

It’s important to note that despite both laptops having the same model of panel, there will still be a small difference in response times. I could have used the same external monitor to rule out this difference, however that would bypass Optimus and not achieve our second goal above.

Total System Latency In CS:GO

These are the total end-to-end system latency times from the testing. We’ll start with Optimus enabled, followed by Optimus disabled.

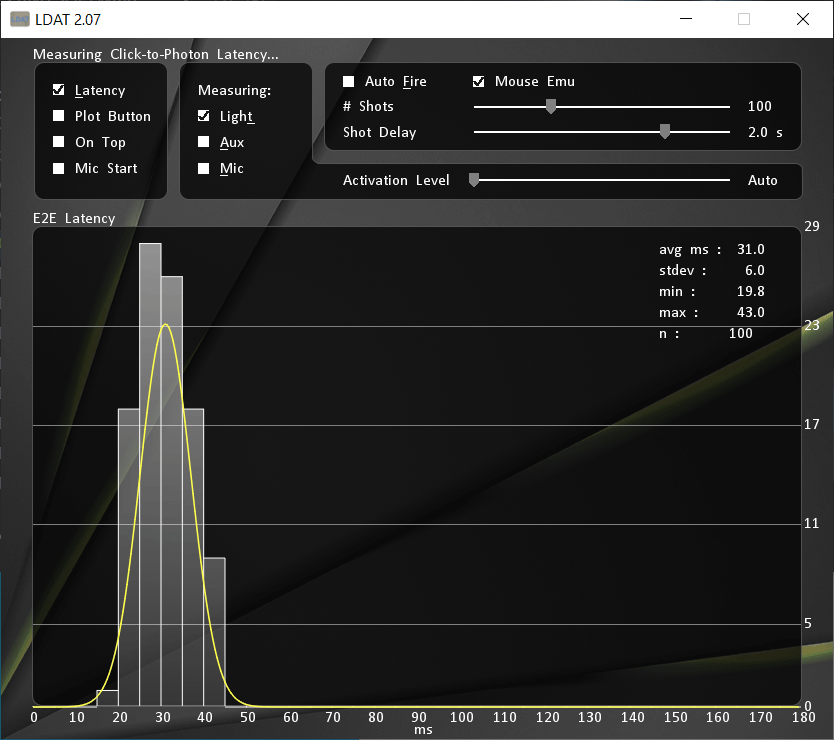

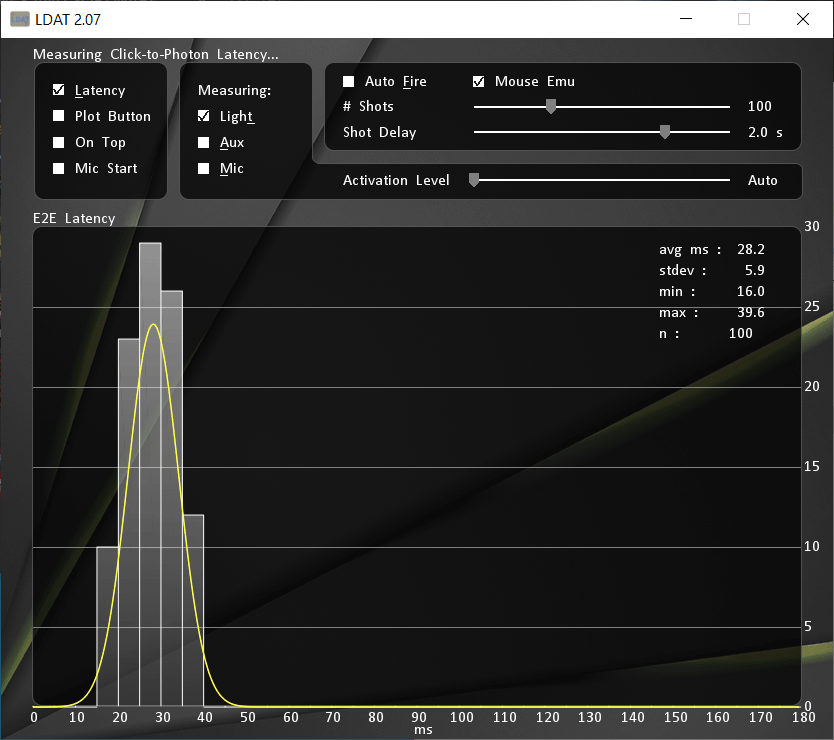

Optimus Enabled

With Optimus enabled, the AMD laptop is 0.5ms faster on average. What I found more interesting was that the standard deviation on the AMD laptop was lower, so out of the 100 test samples, the AMD laptop was offering more consistent results.

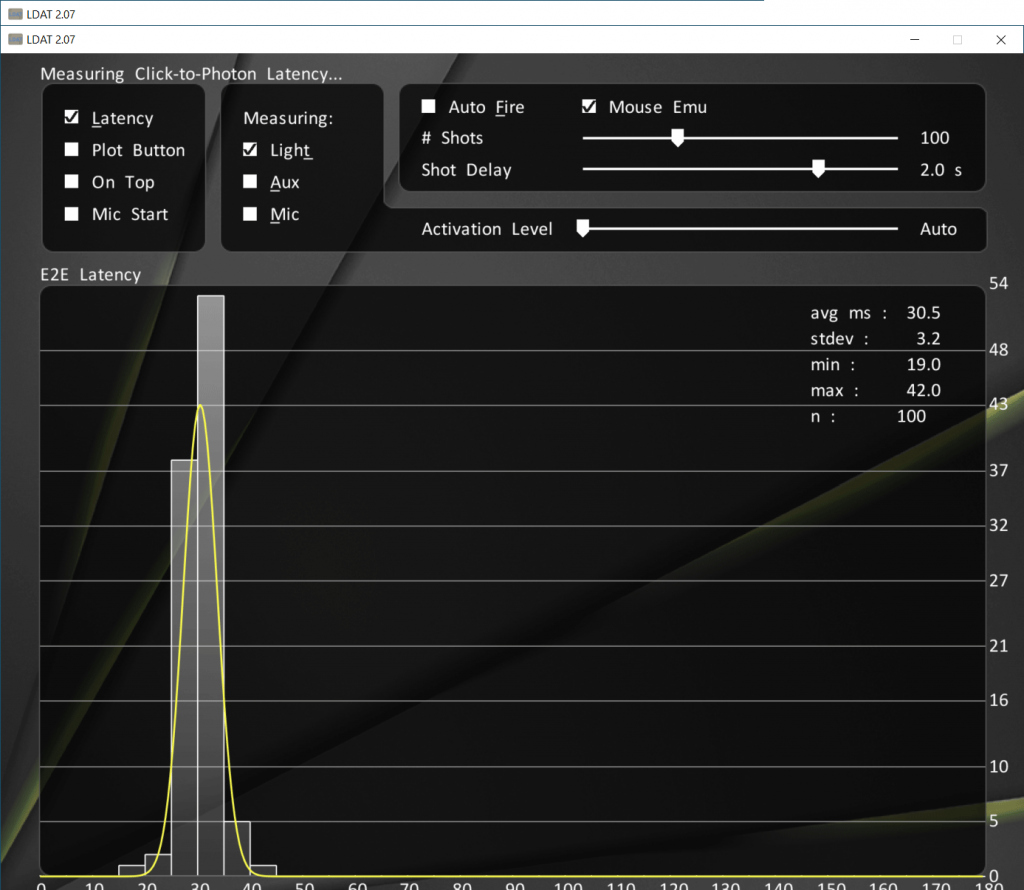

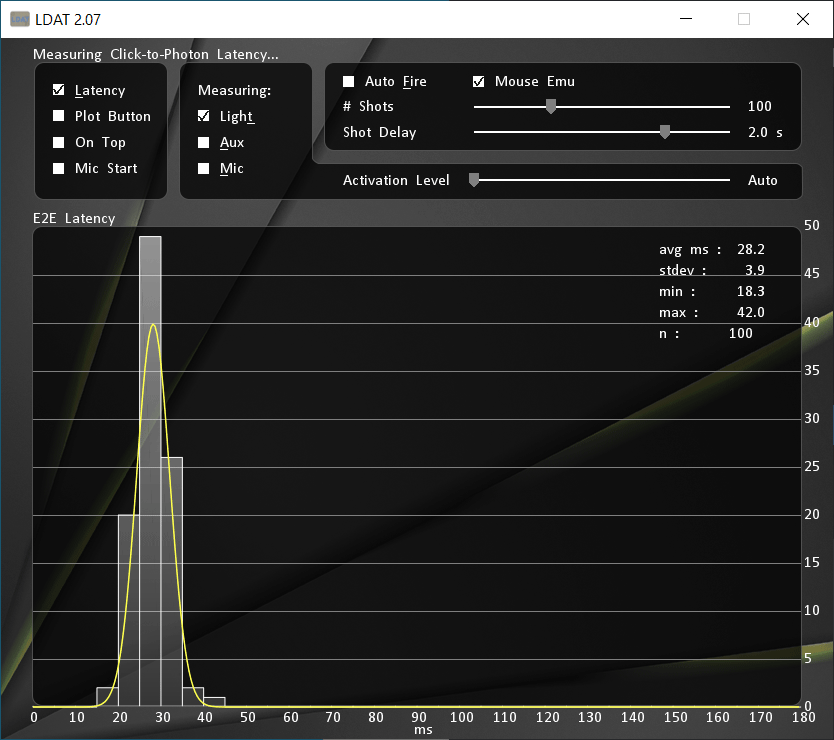

Optimus Disabled

Let’s see how things change with Optimus disabled.

Total latency drops with Optimus disabled, and although both laptops now have the same average latency of 28.2ms, the AMD system still has a lower standard deviation and therefore more consistent results.

It’s important to note that not all laptops offer the ability to disable Optimus, however it appears that lower latency is another benefit of the feature. I’ve already shown that disabling Optimus can boost FPS in games, and now we know it can improve overall latency too.

An Easier To View Summary

I’ve summarized the total end-to-end system latency from each configuration in the table below for easier viewing:

| | AMD Ryzen 7 5800H | Intel i7-10875H |
|---|---|---|
| Optimus Enabled | 30.5ms | 31.0ms |
| Optimus Disabled | 28.2ms | 28.2ms |

Enabling Optimus adds latency on both laptops. This makes sense, given the display signal from the Nvidia discrete graphics must first go via the integrated graphics before reaching the screen.

With Optimus disabled, the integrated graphics are bypassed and the Nvidia GPU outputs directly to the screen.

Both laptops were averaging 28.2ms with Optimus disabled, however the Intel system was slightly slower when both had Optimus enabled.

Based on these results, we can say:

- Total AMD laptop latency increases by 8.16% with Optimus enabled

- Total Intel laptop latency increases by 9.93% with Optimus enabled
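The percentages above follow from the averages in the summary table: the latency added by Optimus divided by the Optimus-disabled latency. A minimal Python sketch of that arithmetic is below; the dictionary layout and the `optimus_overhead` function name are just illustrative choices, while the millisecond values are the averages from the table.

```python
# Average end-to-end latency in milliseconds, taken from the summary table.
latency_ms = {
    "AMD Ryzen 7 5800H": {"optimus_enabled": 30.5, "optimus_disabled": 28.2},
    "Intel i7-10875H": {"optimus_enabled": 31.0, "optimus_disabled": 28.2},
}


def optimus_overhead(enabled_ms, disabled_ms):
    """Return (added latency in ms, percentage increase over Optimus disabled)."""
    added_ms = enabled_ms - disabled_ms
    percent = added_ms / disabled_ms * 100
    return added_ms, percent


for laptop, result in latency_ms.items():
    added_ms, percent = optimus_overhead(
        result["optimus_enabled"], result["optimus_disabled"]
    )
    print(f"{laptop}: +{added_ms:.1f} ms ({percent:.2f}% increase)")
```

In absolute terms that works out to roughly 2.3ms of added latency on the AMD laptop and 2.8ms on the Intel laptop.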

Conclusion

Although I have made an effort to use two laptops that are as close to being the same as possible with both Intel and AMD processors, the sample size is too small to draw sweeping conclusions.

Given both laptops scored the same with Optimus disabled, it may be the case that the difference in processor is otherwise too insignificant to matter. The results could also change based on the game. I chose CS:GO as it is known to be fairly processor dependent.

With Optimus enabled, it may be the case that an Intel + Optimus setup adds more overhead compared to AMD + Optimus. At least this is what this data points to, but again I need to test a lot more laptops before making such claims.

Regardless, I think these are still interesting results, and I will likely start testing this on all gaming laptops going forward. Once I have a large sample size it may be easier to identify trends.


20 Comments

XMG_gg

Nice! 🙂

Jose

So, if I could ask you for your opinion. Since it seems (at least in this test) that the difference is insignificant between Intel/AMD. Would a 10th gen intel 10875H in something like the new 2021 Advanced Razor be worth the purchase or would the new ASUS Zephyrus G15 be the better way to go?

Jarrod

I’d expect the G15 to do much better. The advanced has a 45W PL1 even in CPU only workloads which really holds it back, there’s info about it in my review of last year’s model, but it’s my understanding this hasn’t changed with Nvidia 3000.

Sanjay Jayan

Great post! I have a quick question, I recently pre ordered the Asus ROG Strix Scar 15, because of this whole Optimus issue, I’m really tempted to get an external monitor for better fps. What’s caused me even more confusion is, which port on the laptop provides best performance! There is usb c and hdmi. I think only usb c links directly to the 3080 gpu. If I buy a monitor which doesn’t have a USB C and instead I use a USB C to DP converted, will it affect my performance? Or would you recommend getting a monitor with USB C. Appreciate any comments you can provide. Thanks!

Jarrod

I will cover this in the review (working on it right now), but the HDMI port connects to the iGPU and Type-C to dGPU. My video on Wednesday next week will show the performance difference using an external monitor with Type-C can offer. Converting should be fine.

Sanjay

Great, thanks! Look forward to the review!

Bradley Gregory

Jarod, can we get a list of laptops currently available that allow us to disable optimus? I’ve purchased 2 laptops. 1 Asus strix scar with the 2070 super and second was a MSI GE75 with the 2080 super. I saw a dramatic improvement normally 30+ FPS when using an external display. I obviously do NOT want to do this. That’s why I want a laptop…. or I would just build a desktop if I’m doing that.

Jarrod

It’s not really something I can do as the only way I know is if I make it based on the tiny subset of machines that I’ve actually used.

Solitude

This is the measurement I wanted for a long time. Not just Intel vs AMD, but also between different laptops as well.

Quarks

Maybe I’m just too tired and my brain isn’t working right… but what’s the Y axis supposed to be? 😂

Jarrod

From the Nvidia software? The amount of tests taken. There were 100 shots done in CS:GO, so that is showing the distribution of them.

Tom

G’day Jarrod,

Firstly, a big fan of your content mate, everyone appreciates what you do for the community. I do need some help though. I’ve got $3500 AU to spend on a gaming laptop, and I predominantly play FPS games. What do you recommend?

It’s hard to grasp the new series with Max-P, Max-Q, AMD/Intel etc. Half the time I will have it plugged into a 144Hz monitor. I just want the best bang for buck for gaming performance! Thank you mate!

Cheers

Tom

Jarrod

Hi Tom, Thanks! To get high FPS a laptop that lets you disable optimus would be preferable, unless you plan on connecting an external screen then that’s less of a requirement. With the external monitor it doesn’t much matter as pretty much all modern gaming laptops have at least one display output port that bypasses optimus and connects straight to the discrete graphics, boosting performance. Honestly for that price you can get a lot of different options, in terms of good price to performance ratio in Australia Metabox and Aftershock are generally better value compared to the bigger brands (eg ASUS/MSI etc) as they resell Clevo/Tongfang machines.

Ben

Great post! I wanted to ask whether the acer predator 300’s keyboard issue is fixed yet. Also, I am looking for a good gaming laptop for under $1400 do you have any suggestions?

Jarrod

I haven’t tested Helios 300 in a while, that said can’t recall keyboard issue? Maybe it’s just been too long for me. I’m interested to see how Legion 5 does, should be less than that.

Touee

Could you jarrod please sir next time test anylaptop gaming laptop for 4k resolution… I want to know that does rtx 3060 90w 100w 130w can handle 4k resolution for gaming … ThankYou sir i love your channel so muchhh

Touee

Especially rtx 3060 acer vs rtx 3060 legion 5 130w testing game in 4k … Try high ultra setting or medium setting

Touee

And rtx 3070 laptop in 4k as well sirr please

Martin

What about Advanced Optimus?

You wrote an article about that a while ago, it mentions the Legion 7i and 5i, I haven’t seen the 5i yet anywhere, but the 7i is available (although I believe you only got your hands on the Ryzen version?).

In theory they shouldnt see a real difference between enabled and disabled, maybe a tad higher overall (due to the dynamic switch causing “longer” physical signal ways)

I found a reddit that basically confirms the Legion 5 also have advanced optimus (working)

https://www.reddit.com/r/GamingLaptops/comments/lehe6h/list_of_laptops_with_advanced_optimus/

(There’s a screenshot of nvidia settings towards the end)

Jarrod

I have a 5i here, my Ryzen model (regular 5) has advanced optimus, but it’s the first laptop I’ve ever had with it – even after all this time they are only just starting to come to market.